Beyond Artificial Intelligence: What Triathlon Coaches Really Think About AI

Karen Parnell

November 25, 2025

Insights from an MSc research project on AI in Online Triathlon Coaching by Karen Parnell

Artificial intelligence is rapidly gaining ground in endurance sports. Machine-learning models are now capable of analysing physiological data, predicting fatigue, adjusting training plans, and shaping sessions in real time. From machine learning (ML)-based apps such as Athletica.ai and TriDot to the growing use of large language models (LLMs) such as ChatGPT for communication support, AI is no longer experimental: it is already woven into the training habits of thousands of athletes.

But while AI adoption is accelerating, there has been surprisingly little research into what coaches actually think about these tools.

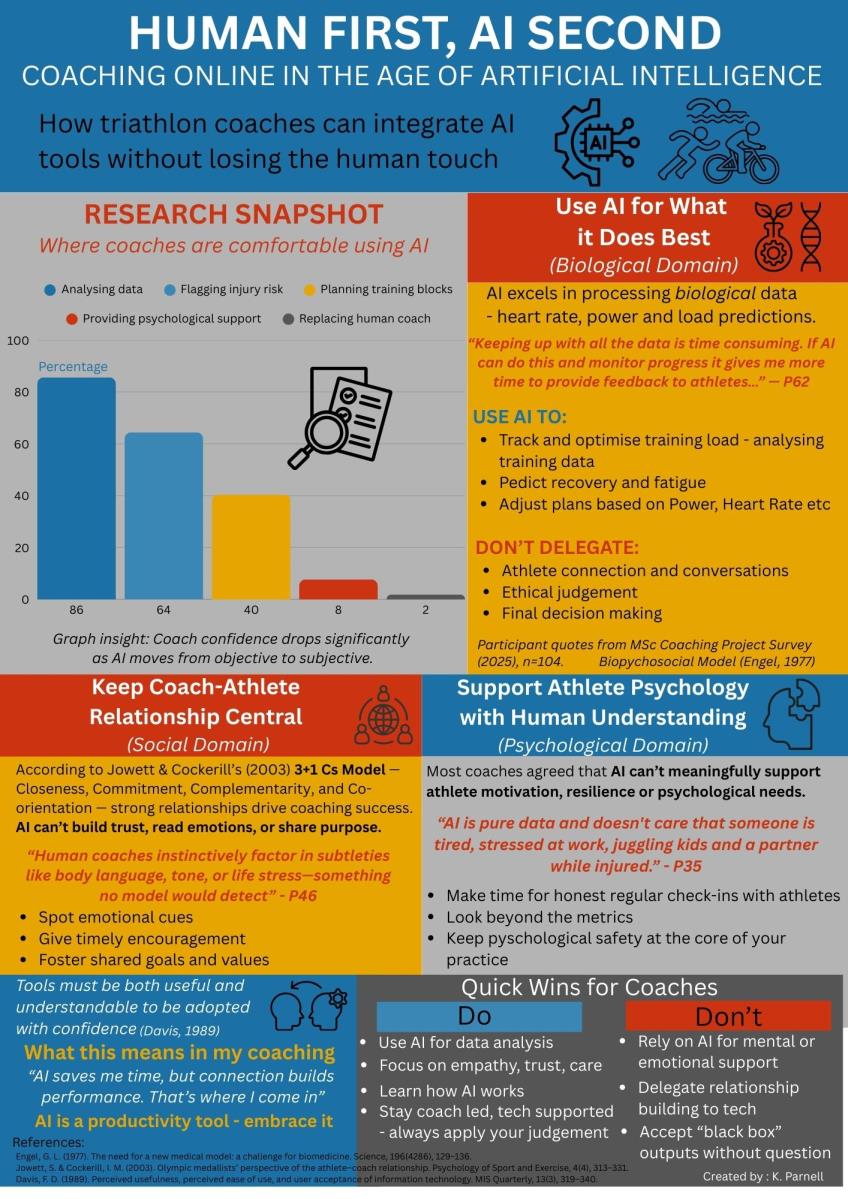

As part of my MSc in Sports Performance Coaching, I set out to fill this gap. I surveyed 104 online triathlon coaches, exploring how they currently use AI, what they see as its strengths and weaknesses, and how they believe it will shape the future of the profession.

What emerged was a clear theme:

AI is excellent at training, but it cannot coach.

Why Look at AI in Coaching?

AI has been used in broader sport science for years. Research shows its strengths in performance monitoring, injury prediction, and training load modelling:

- Machine learning models have predicted injuries in football using GPS and training-load data (Rossi et al., 2018).

- Recurrent neural networks have been used to estimate lactate thresholds in runners (Etxegarai et al., 2018).

- AI-driven systems have generated more stable training adaptations than static programmes in endurance runners (Nilsson et al., 2023).

- Tennis research highlights AI’s value for tactical and psychological-state feedback (Sampaio et al., 2024).

These examples reflect what Hammes et al. (2022) call the “success stories and challenges” of AI in elite sport: excellent computational power but limited nuance.

While this work is expanding, endurance coaching — especially triathlon — has not been widely studied. Coaches are working in increasingly digital environments (Bennett & Szedlak, 2023), yet their perspectives on AI are almost absent from the literature.

My research aimed to address that.

How the Study Was Conducted

- Mixed-methods online survey

- 104 triathlon coaches who coach primarily online

- Input from TrainingPeaks, TrainingTilt, Final Surge and Athletica.ai

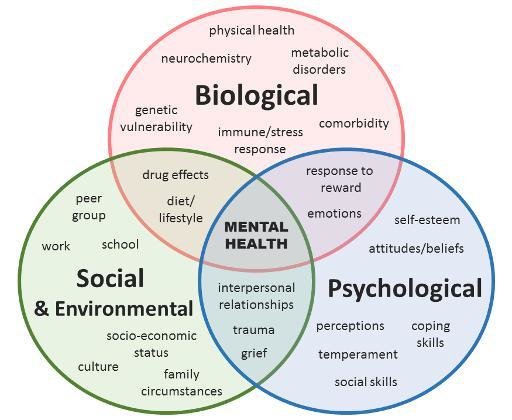

- Quantitative analysis and thematic analysis guided by the Biopsychosocial Model (Engel, 1977)

Coaches were asked about:

- Their current use of platforms and AI features

- Their expectations about how AI will develop

- Which tasks they would or would not trust AI with

- Their views on AI’s ability to support athlete wellbeing

- Their confidence, concerns, and readiness

The biopsychosocial lens proved especially useful because it allowed findings to be analysed across biological, psychological, and social domains — a structure that mirrors how coaches actually think and work.

THE BIOPSYCHOSOCIAL MODEL OF MENTAL HEALTH (ENGEL, 1977)

Key Findings

1. Coaches Strongly Support AI in the Biological Domain

This was the clearest area of agreement. Coaches saw AI as a powerful assistant for:

- Analysing large volumes of training data

- Spotting trends or anomalies they might otherwise miss

- Predicting fatigue or injury risk

- Adjusting sessions based on readiness

- Summarising key metrics

One coach described AI as a “strategic partner”, echoing both the research of Rothschild et al. (2024) on AI-based recovery prediction and the adaptive systems reviewed by Rajšp and Fister (2020).

A large majority of coaches (86%) wanted AI to flag issues such as stagnation, overtraining or injury risk. This capability is widely explored in the machine-learning literature but rarely implemented well in coaching platforms (Van Eetvelde et al., 2021).

The pattern mirrors what Bodemer (2023) calls AI-supported individualisation: powerful for modelling physical stress, but limited outside that domain.

2. AI Fails to Meet Coaches’ Psychological or Social Needs

This finding aligns closely with research from Ghezelseflou & Choori (2023), who noted that athletes using AI-driven coaching systems missed empathy, trust, and conversational nuance.

Coaches in my study were unequivocal:

- AI cannot provide emotional support

- AI cannot read tone or “what’s not being said”

- AI cannot respond to stress, crisis, or life context

- AI cannot build rapport or trust

- AI cannot motivate or challenge an athlete appropriately

One coach put it plainly:

“AI is training, not coaching.” (P16)

This reinforces what Jowett & Cockerill (2003) and Thelwell et al. (2008) have long argued: that the coach–athlete relationship is central to athlete development — and deeply human.

3. Coaches Want a Hybrid Future

Most coaches pictured a future where AI takes care of automation, while coaches focus on connection.

They wanted AI to:

- Streamline analysis

- Provide adaptive recommendations

- Suggest session tweaks

- Produce draft communication

- Reduce admin

But they did not want AI to:

- Take over the full plan

- Make decisions unilaterally

- Communicate with athletes independently

- Replace human judgment

This matches the “assistant coach” model described by Muijlwijk et al. (2024) in their work on human–AI interaction in running.

4. Over-Automation Creates Ethical and Professional Concerns

Some coaches raised concerns around:

- Loss of professional identity

- Accountability for AI-generated decisions

- Transparency, especially in ML “black box” models (see Barger, 2025)

- Data privacy and ownership of their intellectual property

- Athletes over-trusting automation

A number of coaches expressed worry that if platforms aim to replace coaching, they risk undermining the depth and quality of athlete support.

This echoes the warnings from Sperlich et al. (2023) about AI’s socio-ethical risks in sport.

5. Coaches Vary in AI Readiness

Some felt confident adopting AI tools; others admitted they lacked technical understanding. This reflects the Technology Acceptance Model (Davis, 1989), in which perceived ease of use and perceived usefulness are the key drivers of adoption.

Coaches will need support — and developers must provide clear guidance, transparent models, and meaningful onboarding if they want adoption to grow.

What This Means for Coaches

AI isn’t replacing coaching. Instead, it is redefining where coaches add the most value.

- AI enhances the science

- Coaches enhance the art

The most future-ready coaches will be those who can:

- Use AI confidently for analysis and planning

- Retain ownership of the relational and psychological work

- Understand AI’s limitations

- Advocate for athlete wellbeing when automation goes too far

The best coaching in the coming years will be more human, not less — because AI will take on the background processing that often distracts from genuine connection.

What This Means for Software Providers

The message for developers is very clear.

Platforms that succeed will be those that:

- Strengthen coach–athlete communication

- Give coaches control and override authority

- Provide transparent, explainable AI, including the models it uses (e.g., periodisation)

- Offer flexible levels of automation

- Support training rather than replace coaches

- Collaborate with coaches to develop AI-based training platforms

This aligns closely with Terblanche’s (2020) AI design framework: AI tools should be co-designed with coaches, not imposed upon them.

AI should extend human coaching capability, not attempt to replicate it.

Conclusion

AI is already reshaping endurance sport — but not in the way many predicted. Triathlon coaches are not worried about losing their jobs. They are, however, deeply protective of the relational dimension of coaching, which they see as irreplaceable.

AI’s strength lies in the biological domain: processing data, detecting patterns, and enhancing precision. Its weakness lies in anything requiring empathy, trust, interpretation or emotion.

The future, as coaches see it, is hybrid:

AI-supported, human-led coaching.

As one participant said:

“The best coaches balance science and empathy — AI gives us more of the first, so we can focus on the second.” (P22)

This balance — between intelligence and intuition — is where the next evolution of endurance coaching will be found.

You can read the full research project on ResearchGate.

Karen Parnell is a Level 3 British Triathlon and IRONMAN Certified Coach, 8020 Endurance Certified Coach, WOWSA Level 3 open water swimming coach, NASM Personal Trainer, and sports technology writer.

Karen has a postgraduate MSc in Sports Performance Coaching from the University of Stirling.

Need a training plan? I have plans on TrainingPeaks and Final Surge.

I also coach a very small number of athletes one-to-one for all triathlon and multi-sport distances, open water swimming events, and running races. Email me for details and availability. Karen.parnell@chilitri.com

FAQ: Artificial Intelligence in Online Triathlon Coaching

For Coaches

Will AI replace triathlon coaches?

No. My research showed overwhelming agreement that AI cannot replace the human elements of coaching — particularly empathy, motivation, communication, and contextual understanding. Coaches see AI as a tool for training, not for coaching.

What tasks are coaches most comfortable handing over to AI?

Coaches supported AI in the biological domain, such as:

- analysing training data

- identifying fatigue or injury risk

- spotting trends

- drafting session adjustments

- summarising metrics

- automating admin

These tasks map neatly onto existing AI capabilities observed in the literature (e.g., Rossi et al., 2018; Nilsson et al., 2023).

Are there areas where coaches do not want AI involved?

Yes. My study showed consistent resistance to AI taking over anything relational or psychological:

- emotional support

- motivation

- athlete wellbeing conversations

- coach–athlete communication

- crisis or stress responses

This reflects findings by Ghezelseflou & Choori (2023) and Jowett & Cockerill (2003) about the importance of the coach–athlete bond.

Does AI improve athlete training outcomes?

AI can enhance training precision — for example through personalised adjustments or better load modelling — but it does not replace reflective conversations, psychological readiness, or context. Most coaches felt AI improves the science of training, but not the art.

How can I use AI without losing my coaching identity?

Use AI as:

- an analyst

- a monitoring tool

- a second pair of eyes

- an early warning system

- an administrative assistant

Avoid using AI as:

- your voice

- your intuition

- your communicator

- your decision-maker

This approach matches the hybrid “AI-assisted, human-led” model my study identified.

Do I need technical knowledge to use AI well?

Not initially. The study participants varied widely in technical ability, but most believed that understanding the limitations of AI is more important than understanding its algorithms. Transparency from platforms (e.g., explaining models, assumptions, periodisation logic) will help coaches adopt AI successfully.

Will athletes trust AI-generated decisions?

My study suggests trust depends on the coach. Athletes rely on you to interpret data and protect their wellbeing. If AI gives a recommendation, athletes want to know their coach has reviewed it — not that a system is operating independently.

For Platform Providers

What AI features do coaches actually want?

My study showed coaches consistently want:

- data pattern recognition

- early warning fatigue/injury flags

- automated plan adjustments (coach-overseen)

- session drafting

- integrated readiness analysis

- admin automation

These are strongly aligned with existing ML literature (e.g., Etxegarai et al., 2018; Rajšp & Fister, 2020; Rothschild et al., 2024).

What features should platforms avoid?

Coaches were highly resistant to:

- full automation of training plans

- AI communicating directly with athletes without oversight

- systems that override coach judgment

- opaque “black box” decisions

- attempts to replace the coach entirely

This aligns with the cautions raised by Sperlich et al. (2023) and Barger (2025) regarding explainability and the coach–athlete working alliance.

What ethical concerns matter most to coaches?

My survey highlighted five major concerns:

- Loss of professional identity

- Unclear accountability for AI-generated plans

- Data privacy and IP ownership

- Black-box decision-making (limited explainability)

- Athletes over-trusting automated decisions

Ethical design principles from Terblanche (2020) are particularly relevant: co-design, transparency, control, and contextualisation.

How much transparency do coaches expect?

A lot. Coaches want to know:

- what data the AI is using

- what the model is assuming

- why a session was changed

- how the system interprets readiness or fatigue

- what periodisation logic is being applied

Opaque systems reduce trust, a finding echoed across my survey responses and in the AI-in-sport literature.

Should platforms aim to replace coaches with AI?

No. This is the fastest route to rejection.

Coaches do not want replacement models and will resist platforms that present themselves this way. My study found that the most acceptable vision is a human-led, AI-supported system: the “assistant coach” model also described by Muijlwijk et al. (2024).

How can platforms best support adoption?

The study participants indicated that platforms should:

- educate coaches on how AI works

- build simple, clear onboarding

- allow customisable automation levels

- ensure coach override on all decisions

- reinforce the value of the human coach

AI success in endurance sport will depend on respecting the social and psychological dimensions of coaching — the elements AI cannot replicate.

References

Barger, R. (2025). The working alliance in AI-augmented coaching: Risks, expectations, and implications.

Bennett, G., & Szedlak, C. (2023). Managing workload and athlete wellbeing in remote coaching environments.

Bodemer, D. (2023). AI-supported individualisation in sport practice: Potentials and limitations.

Davis, F. D. (1989). Perceived usefulness, perceived ease of use, and user acceptance of information technology.

Engel, G. (1977). The biopsychosocial model and the challenge of medicine.

Etxegarai, A., et al. (2018). Lactate threshold estimation in runners using recurrent neural networks.

Ghezelseflou, A., & Choori, S. (2023). Athlete experiences with AI-driven coaching systems: Trust, empathy and interaction.

Hammes, R., et al. (2022). Artificial intelligence in elite sport: Success stories and challenges.

Jowett, S., & Cockerill, I. (2003). Understanding the coach–athlete relationship.

Muijlwijk, R., et al. (2024). Human–AI interaction in running: The assistant-coach model.

Nilsson, M., et al. (2023). Adaptive AI training systems versus static programmes in endurance runners.

Rajšp, A., & Fister, I. (2020). Smart training systems using machine learning in sport.

Rossi, A., et al. (2018). Injury prediction in football using machine learning and training-load data.

Rothschild, J., et al. (2024). AI-based recovery prediction for endurance athletes.

Sampaio, J., et al. (2024). AI-driven analysis of psychological and tactical factors in tennis.

Sperlich, B., et al. (2023). Socio-ethical risks of AI in sport performance environments.

Terblanche, N. (2020). The DIAC framework for AI design in coaching contexts: Co-design, transparency and contextual awareness.

Van Eetvelde, H., et al. (2021). Limitations of machine learning injury prediction in team and endurance sports.